Adversary emulation has become a really popular activity in organisations lately. It helps uncover security holes in the organisation’s network and is generally a fun activity to do. In this post, we will look at a blend of EDR tests, adversary emulation, and enhancing threat detection.

UniCon 2023

Just a heads up in case you prefer watching or listening to reading: I presented a very similar version of this blog post at SCYTHE’s UniCon 2023. You can watch “The Blurred Lines of Red and Blue: EDR Tests and Adversary Emulation” here. Enjoy!

If you do prefer reading, please carry on with the post!

Threat Detection

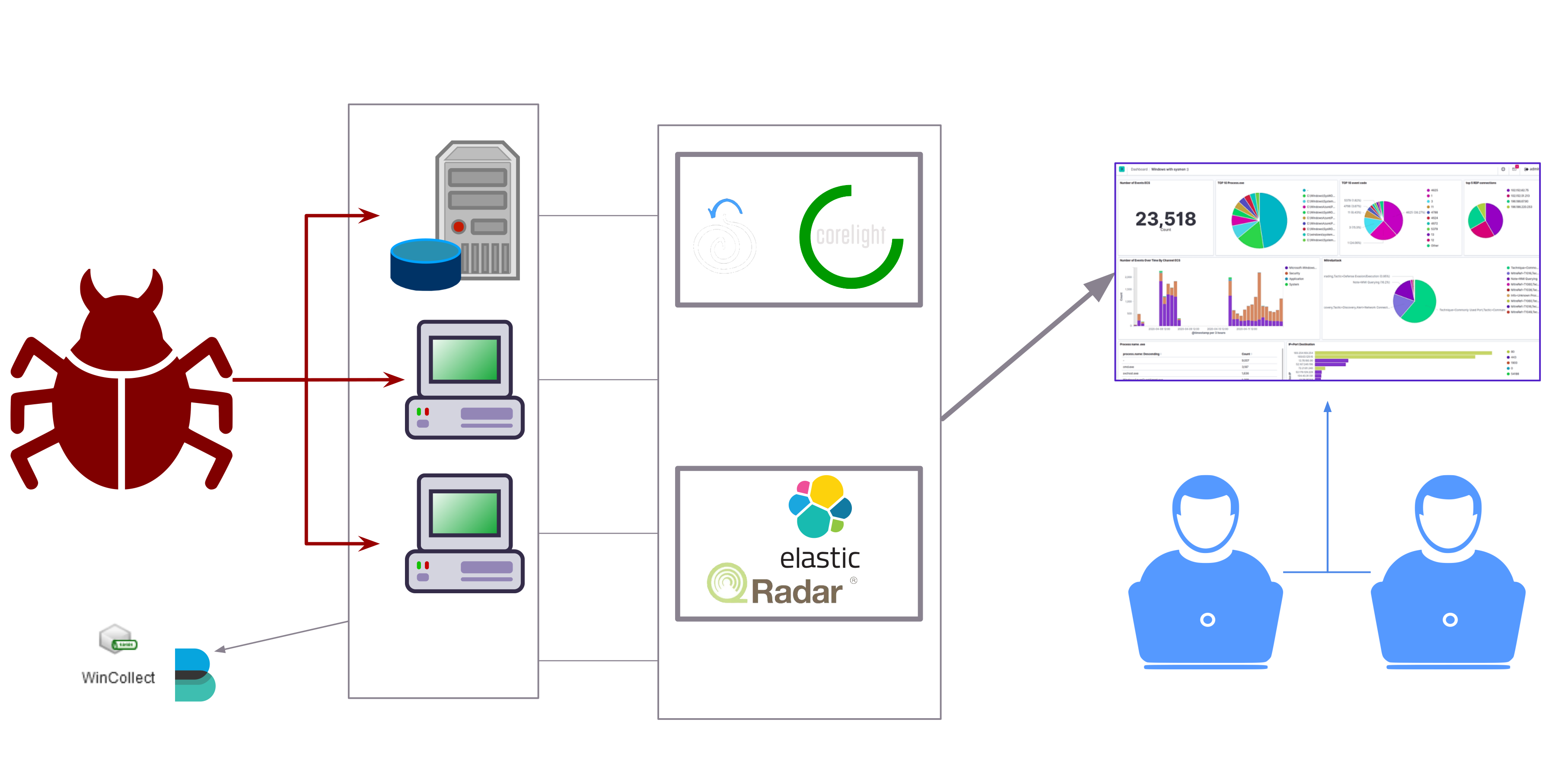

At the bare minimum, threat detection in an organisation is done by collecting data from hosts and the network. The data (logs, metrics, and so on) is forwarded to a SIEM solution, which SOC analysts monitor for alerts. Most organisations also use Network Security Monitoring (NSM) solutions.

Of course, more mature organisations have different (and probably more advanced) setups but the base stays relatively the same.

Adversary Emulation

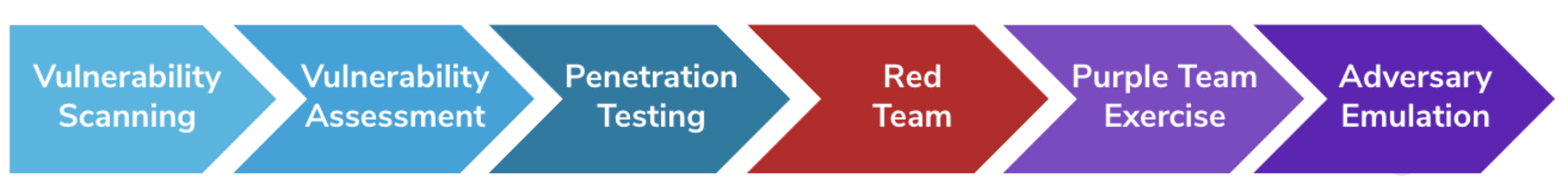

According to SCYTHE’s Ethical Hacking Maturity Model, adversary emulation is the most mature stage of “ethical hacking”. It is often performed as a purple team activity, meaning it involves both the red and blue teams of an organisation. Adversary emulation can be done in two ways: either by running the actual malware in an isolated environment that closely mirrors the setup and structure of the organisation’s real environment, or by running emulations of the actual attacks, using the latest and greatest techniques from APT groups.

SCYTHE’s Ethical Hacking Maturity Model

Adversary emulation can be used to assess how well the organisation can withstand attacks, and can also be used to create detection use cases.

In this post, we’ll assume that the organisation will go for the actual malware execution method of adversary emulation. The organisation has a network that is very similar to their real one and they run their attacks there to see how it holds up.

Typical adversary emulation setup

In our case, the red team would be running the attacks and the blue team would then detect these attacks and create detection use cases for them. However, it would typically be a mix of both. Both teams would work together as a virtual team where the blue team could execute some of the attacks or maybe the red team could give insights on creating the detection use cases. This kind of activity really blurs the line between the blue and red team roles!

We now have a general idea of what adversary emulation is and how threat detection works. Let’s take a look at how detection use cases are created.

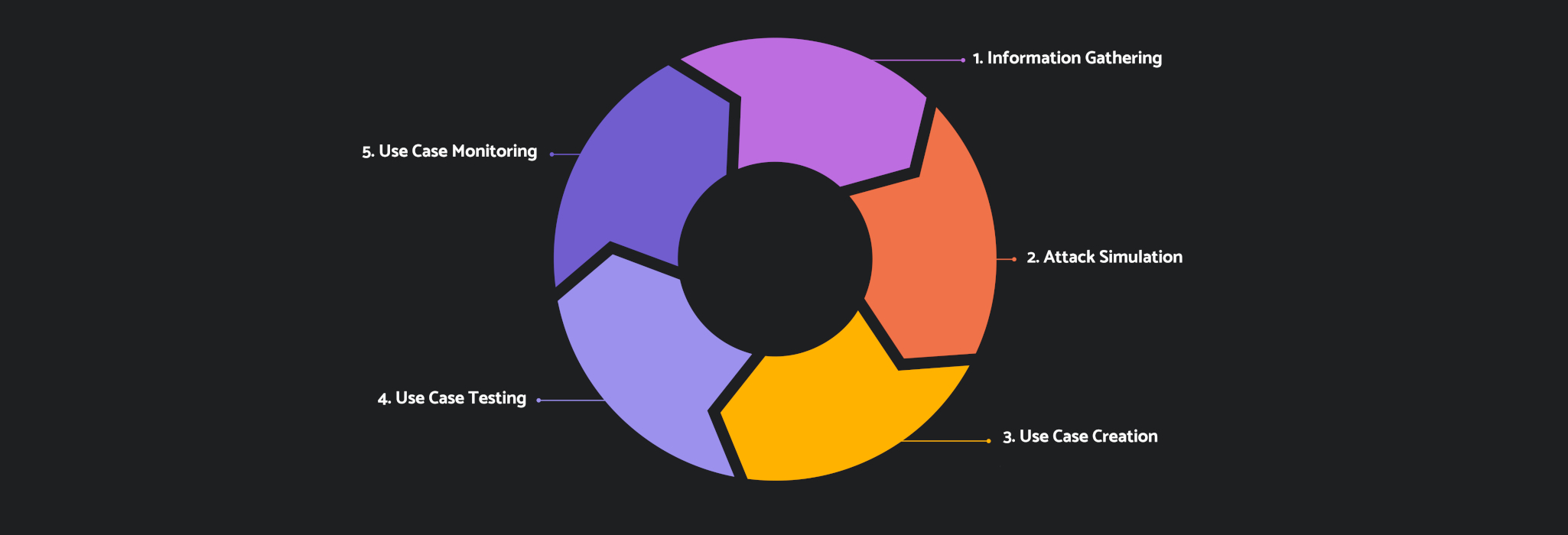

Detection Use Case Development Lifecycle

The detection use case development lifecycle

1. Information Gathering

In the first phase, the team would typically look for new TTPs, detection bypasses, and any other form of attack on the internet and in threat intel sources. Don’t underestimate any online source: you might find a really effective attack that your detection use case library doesn’t cover on some obscure blog from 2012, or a fresh bypass on Twitter. It pays to keep looking.

2. Attack Simulation

After you’ve decided on the new attacks you’re going to test, it is time to run them! Whether you’ve gone for the isolated emulation network or the organisation’s real network, you should make sure that all of your data shippers and detection software are running properly. This activity will, in general, assess their capabilities. In this stage, the attacks can be automated or even just documented using tools like CALDERA, Atomic Red Team, and SCYTHE. These platforms differ and offer different features, so I’d recommend looking at their overviews and comparisons between them before using them!

3. Use Case Creation

The attacks have now been executed and have left some artefacts on the system and inside the network. It is now time to create detection logic and write your use cases. A common and very effective format for writing detection use cases is Sigma rules. Data sources used for these detections include Sysmon, native OS logs, auditd on Linux, EDR logs, and pretty much any logging source you want to use! You can even use something like Procmon to get a general idea of what was modified throughout the simulation.

Example: You notice that a registry key was added during the attack, then create a rule for it based on Sysmon event ID 12, Windows event ID 4657, or an EDR event (if one exists).

Windows Registry auditing requires enabling the Object Access audit policy and is often very noisy. Be careful when using it, and always scope the auditing properly using the Group Policy editor and SACLs.
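To make the registry example a bit more concrete, here is a minimal sketch of the detection logic in Python. It assumes your pipeline has already parsed Sysmon events into dictionaries with `EventID`, `EventType`, and `TargetObject` fields (adjust to whatever your shipper actually produces). The watched key and the function name are hypothetical and only there for illustration. In practice you would express this as a Sigma rule or a SIEM query rather than Python.

```python
from typing import Mapping

# Hypothetical key fragment left behind by the emulated attack (illustration only).
WATCHED_KEY_FRAGMENT = r"\software\examplecorp\implant"


def is_suspicious_registry_event(event: Mapping[str, object]) -> bool:
    """Return True for a Sysmon registry key creation under the watched key.

    Assumes the event is a parsed Sysmon record; the field names used here
    are an assumption about how your pipeline names them.
    """
    # Sysmon event ID 12 covers registry object creation and deletion.
    if event.get("EventID") != 12:
        return False
    # Only key creation is interesting for this use case.
    if event.get("EventType") != "CreateKey":
        return False
    # Registry paths are case-insensitive, so compare in lower case.
    target = str(event.get("TargetObject", "")).lower()
    return WATCHED_KEY_FRAGMENT in target
```

Keeping the match narrow (one event ID, one event type, one key fragment) is deliberate: it keeps the noise down, which matters a lot with registry telemetry.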

4. Use Case Testing

After the use cases have been written and have been implemented on the SIEM, it is now time to execute these attacks again and test the effectiveness of the use cases.

5. Use Case Monitoring

The detection rules must’ve (or at least should’ve) fired now that you have run the attacks again after implementing the rules. It is now a great opportunity to monitor for any false positives that are matching the rule or any missed detections. You should also check if the use case correlates with other use cases. For example, a specific adversary could be using a specific technique for persistence which you already have a use case for. In this case, the detections overlap and should be examined more closely. In later iterations of this phase, older attacks could be emulated again to see if the detections are still working.
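The review in this phase can be sketched as a simple comparison between the rules you expected to fire and the rules that actually fired. This is only an illustration: the rule names are made up, and I am assuming you can export both lists from your SIEM in some form.

```python
from typing import Dict, Iterable, List


def review_rule_results(expected: Iterable[str], fired: Iterable[str]) -> Dict[str, List[str]]:
    """Compare the rules expected to fire against the rules that actually fired."""
    expected_set = set(expected)
    fired_set = set(fired)
    return {
        # Rules that should have fired but did not: missed detections.
        "missed": sorted(expected_set - fired_set),
        # Rules that fired without being expected: possible false positives
        # or overlaps with existing use cases, worth a closer look.
        "unexpected": sorted(fired_set - expected_set),
        # Rules that behaved as planned.
        "confirmed": sorted(expected_set & fired_set),
    }


# Hypothetical rule names, for illustration only.
report = review_rule_results(
    expected=["registry_key_added", "amsi_bypass"],
    fired=["registry_key_added", "generic_persistence"],
)
```

Here `report["missed"]` holds the rules to revisit, while `report["unexpected"]` is where overlapping use cases and false positives tend to show up.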

Then we cycle back to stage one and so on.

Now What?

Our threat detection maturity so far is pretty decent. We are:

- Doing adversary emulation to see how well our network stands up to the latest and greatest attacks

- Constantly developing use cases to enhance our detection capabilities

- (Just now!) Running a newly bought EDR that has a few good detection and prevention rules out of the box

Hmm… How can we take this a step further?

Oh, let’s test the EDR! EDRs are great tools for detecting and preventing attacks. Of course, no EDR is perfect, and that is exactly why testing one is a great way to decide which new use cases should be implemented and tested. EDRs serve the detection use case development lifecycle heavily, and I’ll demonstrate how they do that in each phase.

Let’s try this: get a set of attacks, run them in the emulation environment against your EDR, and record what gets prevented and what gets detected. Then, look at whatever the EDR missed and create use cases for it! What if the EDR does detect or prevent your attack? Get creative! Find ways to bypass the EDR. It is genuinely fun. But hey, let’s not get too far ahead of ourselves. What even are EDR tests?

EDR Tests

EDR tests are usually done to perform detection gap analysis, where you try to find all the gaps in your detection capabilities. Sometimes, they are also done for clients of MSSPs when a client is trying to choose which EDR to buy. Most of the time, EDR vendors will claim that their product is the one you should buy. However, no EDR works the same way for everyone. That’s why the EDR should be tested first, and most vendors will give potential clients demos and trial periods of their products.

An EDR test is performed with a specific set of attacks that usually changes over time depending on the detection capabilities of EDRs. These attacks are usually run from a C2 agent. Each attack’s result is recorded so that detection gaps can be analysed later on.

Typically, EDR results would be presented this way:

| | EDR A 🔨 | EDR B 🏰 | EDR C 🌐 |
|---|---|---|---|
| Obfuscated Keylogger | Prevented | Detected | Bypassed |
| fodhelper.exe Privilege Escalation | Prevented | Bypassed | Detected |
| AMSI Bypass | Detected | Bypassed | Prevented |

Each EDR would then be given a detection and prevention efficiency:

- Prevention Efficiency = #Prevented Attacks / #Detected Attacks

- Detection Efficiency = #Detected Attacks / Total #Attacks

Beyond these metrics, the EDR is also tested for other features such as threat intel integration, UI/UX, rate of false positives, and a lot more! However, we won’t get into these as they are outside the scope of this post.
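As a quick worked example, the two efficiency formulas can be applied to the sample results table like this. One assumption of mine, not stated in the formulas: a prevented attack is also counted as detected, since the EDR has to notice an attack before it can block it. Without that, the prevention ratio can exceed 1 or divide by zero. The function and variable names are just for illustration.

```python
from collections import Counter
from typing import Dict, List, Tuple

# Outcomes per EDR, in the row order of the sample table
# (keylogger, fodhelper.exe, AMSI bypass).
RESULTS: Dict[str, List[str]] = {
    "EDR A": ["Prevented", "Prevented", "Detected"],
    "EDR B": ["Detected", "Bypassed", "Bypassed"],
    "EDR C": ["Bypassed", "Detected", "Prevented"],
}


def efficiencies(outcomes: List[str]) -> Tuple[float, float]:
    """Return (prevention_efficiency, detection_efficiency) for one EDR."""
    counts = Counter(outcomes)
    prevented = counts["Prevented"]
    # Assumption: prevented attacks count as detected too.
    detected = prevented + counts["Detected"]
    total = len(outcomes)
    detection = detected / total if total else 0.0
    prevention = prevented / detected if detected else 0.0
    return prevention, detection


for name, outcomes in RESULTS.items():
    prevention, detection = efficiencies(outcomes)
    print(f"{name}: prevention={prevention:.0%}, detection={detection:.0%}")
```

With only three attacks the percentages are very coarse, so treat them as a way to compare runs of the same attack library rather than as absolute scores.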

Some Interesting Results

As non-vendor-specific examples, here are some interesting results from previous EDR tests I’ve performed, which led to changes in the attack library.

- A specific EDR would only detect a PowerShell keylogger if it logged the keystrokes to a file which had the word “keylogger” in its name

- No EDR was able to catch SMTP exfiltration through PowerShell

- Only one EDR out of eight tested was able to detect a specific method of VSS Shadow Copy deletion

It is worth noting that these results are probably old by now, and EDR vendors have more than likely changed their products’ behaviour since. When performing these tests, results would differ greatly from year to year, and they did not always change for the better! Talk about the benefits of competition.

I will never share who these vendors were or which products were involved, so please do not ask. If you’ve encountered anything similar, please do not share which vendor it was. It isn’t cool. Disclose your bypass properly if needed instead of calling a vendor out.

Gaps such as these make it much easier to know what kind of attacks real threat actors may be using and therefore create detection use cases for them.

EDRs are extremely impressive pieces of technology and are very effective when tuned and used properly. I am in no way downplaying their importance or the effort of EDR vendors.

Detection Enhancement using EDR Tests

Now let’s take a look at how all of this fits together!

Information Gathering Enhancement

The failed detections of the EDR can be used to create use cases which fill these detection gaps.
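A minimal sketch of that idea, using the sample results from earlier: pull out every attack a given EDR missed and treat the list as a backlog of use cases to write. The data structure and function name are my own choices for illustration.

```python
from typing import Dict, List

# Sample EDR test results: attack name -> outcome per EDR.
TEST_RESULTS: Dict[str, Dict[str, str]] = {
    "Obfuscated Keylogger": {"EDR A": "Prevented", "EDR B": "Detected", "EDR C": "Bypassed"},
    "fodhelper.exe Privilege Escalation": {"EDR A": "Prevented", "EDR B": "Bypassed", "EDR C": "Detected"},
    "AMSI Bypass": {"EDR A": "Detected", "EDR B": "Bypassed", "EDR C": "Prevented"},
}


def detection_gaps(results: Dict[str, Dict[str, str]], edr: str) -> List[str]:
    """List the attacks that the given EDR neither detected nor prevented."""
    return [
        attack
        for attack, outcomes in results.items()
        if outcomes.get(edr) == "Bypassed"
    ]


# Each entry is a candidate for a new detection use case.
backlog = detection_gaps(TEST_RESULTS, "EDR B")
```

If EDR B were the product deployed in the organisation, `backlog` would contain the fodhelper.exe privilege escalation and the AMSI bypass, which then feed straight into the information gathering phase.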

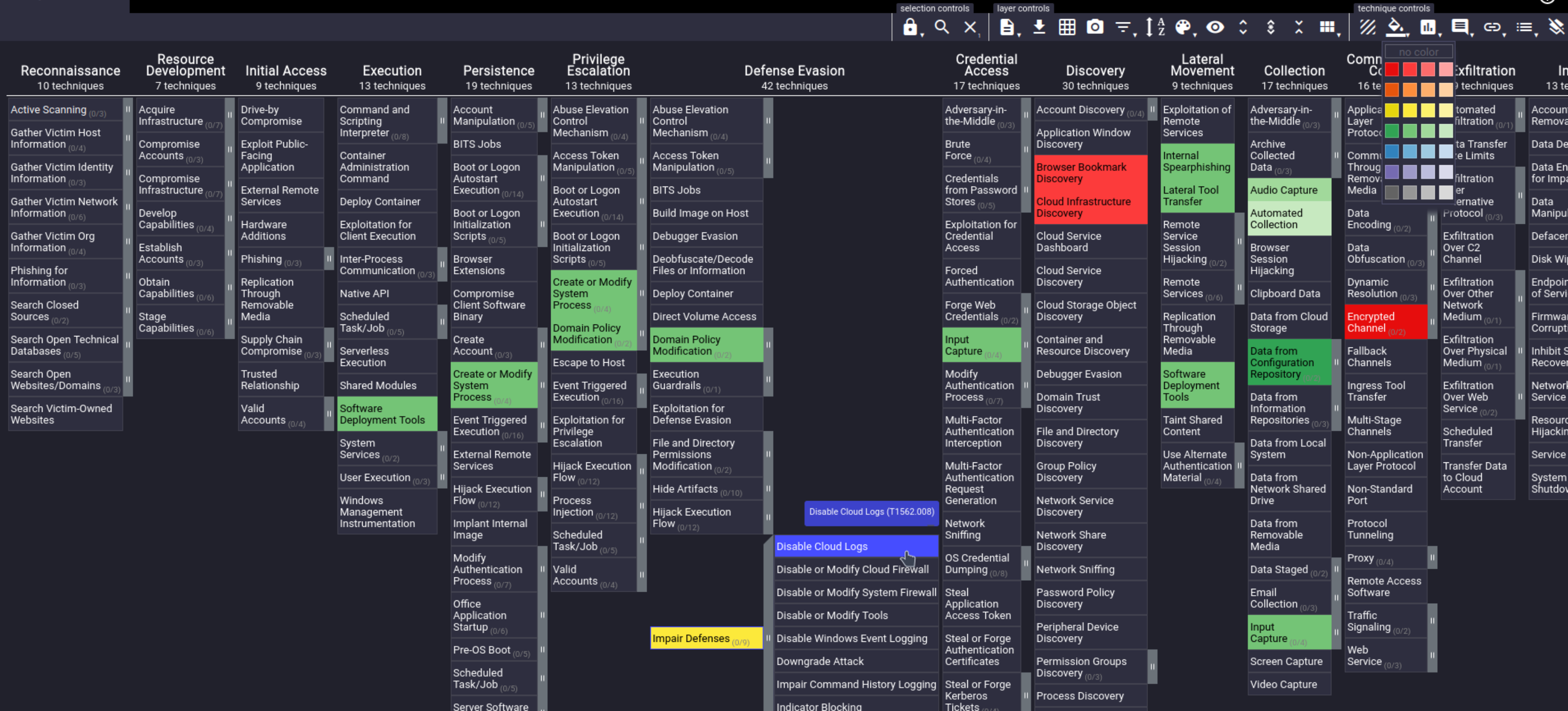

It is also a good idea to map your detection capabilities to MITRE ATT&CK Navigator. Navigator is a very useful tool which can be used to visualise and map ATT&CK matrices.
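As a rough sketch, here is how test results could be turned into a Navigator layer with Python. The technique IDs are my own mapping of the sample attacks to ATT&CK, the scores are an arbitrary scale I picked, and the layer contains only a handful of fields. A real layer also carries version information and other metadata, so check the output against the official layer format for your Navigator version before relying on it.

```python
import json
from typing import Dict, Tuple

# technique ID -> (attack name, outcome). The mapping is my assumption.
COVERAGE: Dict[str, Tuple[str, str]] = {
    "T1056.001": ("Obfuscated Keylogger", "Detected"),
    "T1548.002": ("fodhelper.exe Privilege Escalation", "Bypassed"),
    "T1562.001": ("AMSI Bypass", "Bypassed"),
}

# Arbitrary scoring scale chosen for this example.
SCORES = {"Bypassed": 0, "Detected": 50, "Prevented": 100}


def build_layer(name: str, coverage: Dict[str, Tuple[str, str]]) -> dict:
    """Build a minimal Navigator-style layer from EDR test outcomes."""
    return {
        "name": name,
        "domain": "enterprise-attack",
        "techniques": [
            {
                "techniqueID": technique_id,
                "score": SCORES[outcome],
                "comment": f"{attack}: {outcome}",
            }
            for technique_id, (attack, outcome) in coverage.items()
        ],
    }


layer = build_layer("EDR B test results", COVERAGE)
layer_json = json.dumps(layer, indent=2)  # write this to a .json file and load it in Navigator
```

Scoring by outcome makes the gaps stand out once you apply a colour gradient in Navigator: the zero-score techniques are the ones to write use cases for first.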

Attack Simulation Enhancement

If the EDR did detect or prevent the attack, it becomes even more interesting, because now you have a motive to research ways to bypass it until you succeed. Once you succeed, you can make a detection use case for your bypass.

Use Case Creation and Monitoring Enhancement

An EDR may provide more advanced logging capabilities, which can be used and compared with other logging capabilities that are built into OSs and systems inside the organisation. Of course, as mentioned before, trying new attacks helps with finding new false positives and missed detections, which greatly enhances use case monitoring.

Conclusion

EDR tests are a great way to find new detection use cases to make, and can also be used to find detection gaps in the organisation. The detection use case development lifecycle can be greatly enhanced when paired with effective EDR tests. EDRs themselves offer very decent capabilities which can improve detection and prevention and strengthen the security posture of the organisation if tuned properly.

I haven’t written anything strictly security-related in quite some time. I hope you enjoyed this one and learned something new from it. Share this with your friends that love bypassing EDRs for fun!

And as always, thanks for reading.